Configuring OpenCV in Visual Studio

During configuration I ran into a problem with the Debug build and solved it by following this blogger's method.

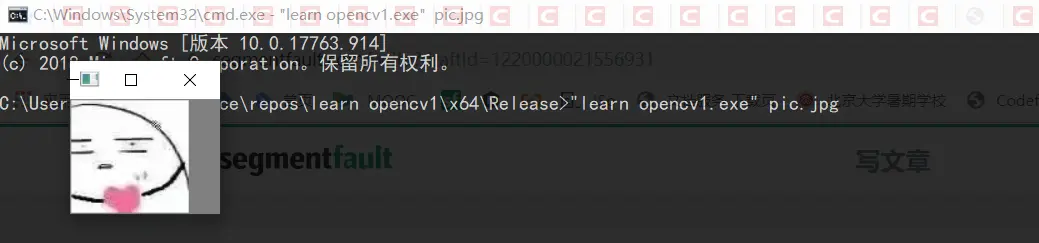

Loading an image

Example2-2

The code is as follows:

#include<opencv2/opencv.hpp>

int main(int argc, char** argv) {

cv::Mat img = cv::imread(argv[1], -1);

if (img.empty()) {

return -1;

}

cv::namedWindow("Example1", cv::WINDOW_AUTOSIZE);

cv::imshow("Example1", img);

cv::waitKey(0);

cv::destroyWindow("Example1");

return 0;

}

With the cv namespace brought into scope, this is equivalent to the following:

#include<opencv2/opencv.hpp>

using namespace cv;

int main(int argc, char** argv) {

Mat img = imread(argv[1], -1);

if (img.empty()) {

return -1;

}

namedWindow("Example1", WINDOW_AUTOSIZE);

imshow("Example1", img);

waitKey(0);

destroyWindow("Example1");

return 0;

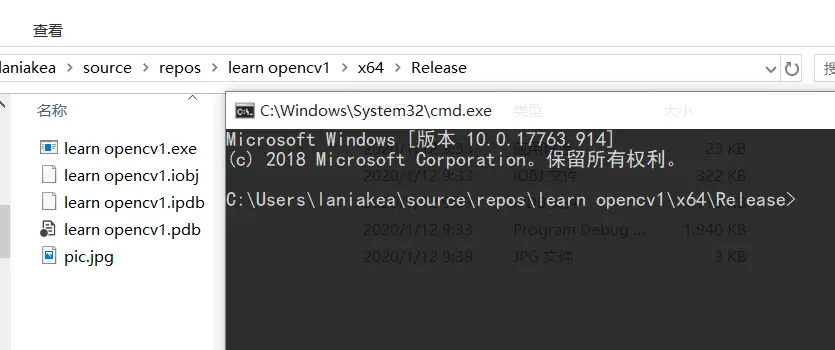

}然後就可以運行了,上面貼出的網頁博主只設置了debug,因為我的代碼是按照learning OpenCV3中來的,裏面加載圖片是在命令框中進行的,如果是在window下,還要再設置一下release,和配debug過程一樣的。

Go to the folder that contains the .exe file and run it:

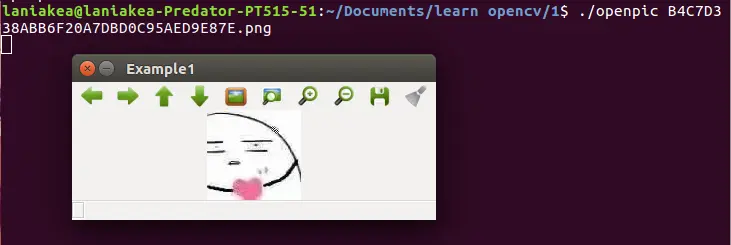

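Now I'll try it again on Ubuntu and, while I'm at it, use CMake, which has been gathering dust for a long time.

First create a .cpp file; mine is called openpic.cpp.

Then write a CMakeLists.txt with the following contents: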

cmake_minimum_required (VERSION 3.5.1)

project(openpic)

find_package( OpenCV REQUIRED )

add_executable(openpic openpic.cpp)

target_link_libraries( openpic ${OpenCV_LIBS} )

Then run the following commands in the terminal, in order:

cmake .

make

Loading a video

Example2-3

#include "opencv2/highgui/highgui.hpp"

#include "opencv2/imgproc/imgproc.hpp"

int main( int argc, char** argv ) {

cv::namedWindow( "Example3", cv::WINDOW_AUTOSIZE );

cv::VideoCapture cap;

cap.open( std::string(argv[1]) );

// string(argv[1]) is the video path plus file name

cv::Mat frame;

for(;;) {

cap >> frame;

// Read frame by frame until every frame has been read

if( frame.empty() ) break; // Ran out of film

cv::imshow( "Example3", frame );

if( cv::waitKey(33) >= 0 ) break; // wait up to 33 ms per frame (about 30 fps); any key press exits

}

return 0;

}

CMakeLists.txt

cmake_minimum_required (VERSION 3.5.1)

project(openvideo)

find_package( OpenCV REQUIRED )

add_executable(openvideo openvideo.cpp)

target_link_libraries( openvideo ${OpenCV_LIBS} )

cmake .

make

./openvideo red.avi

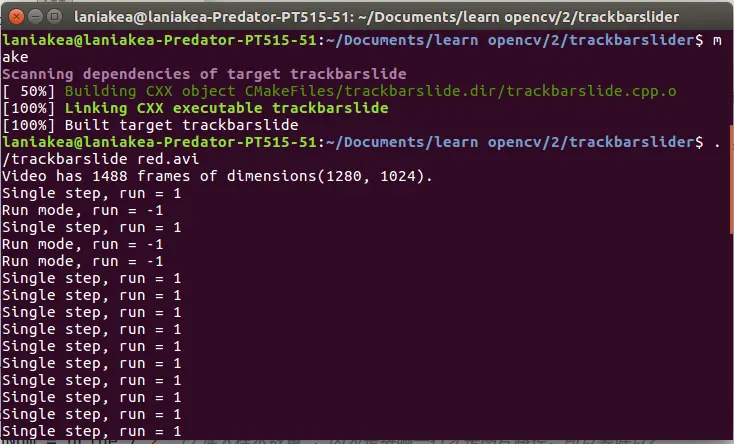
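The 33 ms delay in the loop above corresponds to roughly 30 frames per second. As a rough sketch of where such a number comes from, the helper below turns a frame rate into a per-frame delay. The name frameDelayMs and the 33 ms fallback are my own assumptions for illustration, not something from the book or from OpenCV; the fallback is there because some files or backends may not report a usable frame rate.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical helper (not part of OpenCV): converts a frame rate into the
// per-frame delay in milliseconds that could be passed to cv::waitKey().
int frameDelayMs(double fps) {
    // A missing or invalid rate (0, negative, NaN) falls back to 33 ms,
    // the value hard-coded in the example.
    if (!(fps > 0.0)) {
        return 33;
    }
    // Round to the nearest millisecond, but never return 0:
    // cv::waitKey(0) would block until a key is pressed.
    return std::max(1, static_cast<int>(std::lround(1000.0 / fps)));
}
```

In the example this could be used as cv::waitKey(frameDelayMs(cap.get(cv::CAP_PROP_FPS))), assuming the capture reports its frame rate.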

Adding controls to the video

- Add a progress bar (slider trackbar)

- Press S to single-step (pause)

- Press R to run

- Whenever the slider is dragged, switch to S (paused)

Example2-4

#include "opencv2/highgui/highgui.hpp"

#include "opencv2/imgproc/imgproc.hpp"

#include <iostream>

#include <fstream>

using namespace std;

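// By convention, a g_ prefix marks global variables

int g_slider_position = 0;

int g_run = 1; // start out in single-step mode

// New frames are displayed as long as g_run is non-zero

int g_dontset = 0; // set to 1 when the program, not the user, moves the slider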

cv::VideoCapture g_cap;

void onTrackbarSlide(int pos, void *) {

g_cap.set(cv::CAP_PROP_POS_FRAMES, pos);

if (!g_dontset){ // the slider was moved by the user, not by the program

g_run = 1;

}

g_dontset = 0;

}

int main(int argc, char** argv) {

cv::namedWindow("Example2_4", cv::WINDOW_AUTOSIZE);

g_cap.open(string(argv[1]));

int frames = (int)g_cap.get(cv::CAP_PROP_FRAME_COUNT); // total number of frames

int tmpw = (int)g_cap.get(cv::CAP_PROP_FRAME_WIDTH); // frame width

int tmph = (int)g_cap.get(cv::CAP_PROP_FRAME_HEIGHT); // frame height

cout << "Video has " << frames << " frames of dimensions("

<< tmpw << ", " << tmph << ")." << endl;

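// Calibrate the slider trackbar

cv::createTrackbar("Position" // trackbar label

, "Example2_4" // name of the window the trackbar is placed in

, &g_slider_position // variable bound to the slider

, frames // maximum slider value (total number of frames in the video)

, onTrackbarSlide // callback (pass 0 if none is needed)

);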

cv::Mat frame;

for (;;) {

if (g_run != 0) {

g_cap >> frame; if (frame.empty()) break;

int current_pos = (int)g_cap.get(cv::CAP_PROP_POS_FRAMES);

g_dontset = 1;

cv::setTrackbarPos("Position", "Example2_4", current_pos);

cv::imshow("Example2_4", frame);

g_run -= 1;

}

char c = (char)cv::waitKey(10);

if (c == 's') // single step

{

g_run = 1; cout << "Single step, run = " << g_run << endl;

}

if (c == 'r') // run mode

{

g_run = -1; cout << "Run mode, run = " << g_run << endl;

}

if (c == 27)

break;

}

return(0);

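}

Add a global variable to represent the trackbar position.

Add a callback that updates this variable and seeks to the requested position in the video.

When the user moves the trackbar, g_run is set to 1, so playback drops into single-step mode.

Requirement: as the video advances, the trackbar position advances too.

We use a flag, g_dontset, so that these programmatic trackbar updates avoid triggering single-step mode.

The callback routine:

void onTrackbarSlide(int pos, void *)

It is passed a 32-bit integer, pos, the new trackbar position.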

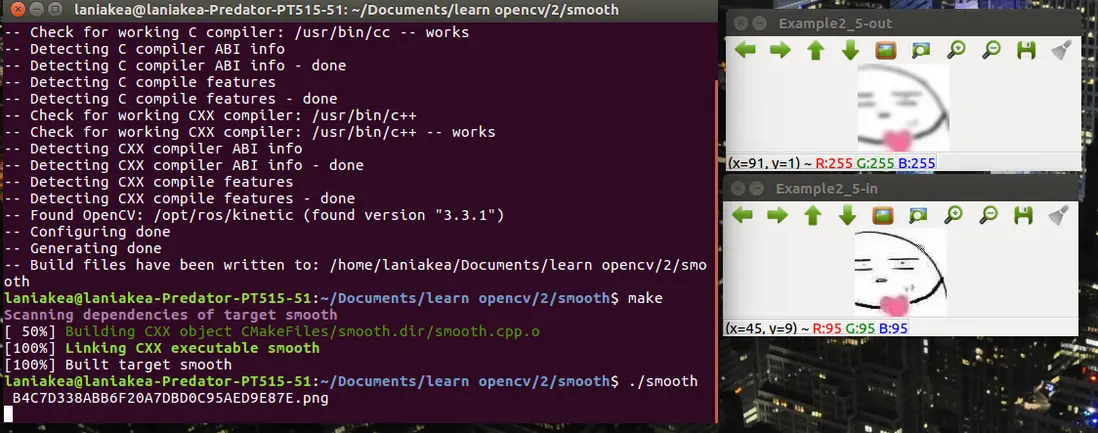
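The interplay between g_run, g_dontset and the callback is easy to lose track of, so here is a small sketch that models only that logic, with no OpenCV involved. PlaybackState and its members are names I made up for illustration. It assumes, as the example does, that moving the slider from the program also fires the callback.

```cpp
// Stand-in for the globals g_run and g_dontset; no OpenCV needed.
struct PlaybackState {
    int run = 1;           // non-zero: show a frame; 1 means "one frame, then pause"
    bool dontset = false;  // true while the program itself is moving the slider
    int framesShown = 0;   // counts frames that would have been displayed

    // Mirrors onTrackbarSlide(): a user drag forces single-step mode,
    // a programmatic update (dontset == true) leaves the mode alone.
    void onSlider() {
        if (!dontset) run = 1;
        dontset = false;
    }

    // Mirrors the key handling: 's' = single step, 'r' = run continuously.
    void onKey(char c) {
        if (c == 's') run = 1;
        if (c == 'r') run = -1;
    }

    // One pass of the main loop.
    void tick() {
        if (run != 0) {
            ++framesShown;   // stands in for reading and showing a frame
            dontset = true;  // the next slider update comes from the program
            onSlider();      // stands in for cv::setTrackbarPos() firing the callback
            run -= 1;        // 1 -> 0 pauses; -1 -> -2 stays non-zero and keeps running
        }
    }
};
```

Starting from the default state, one frame is shown and playback pauses; 's' shows exactly one more frame, 'r' keeps frames coming, and a user drag (onSlider() called while dontset is false) drops back to a single frame.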

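A simple transformation: smoothing

Many basic vision tasks involve applying filters to a video stream.

Later we will process every frame of a video this way.

Smoothing an image

Example2-5

Smoothing reduces the information content of an image by convolving it with a Gaussian or a similar function.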
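To make "convolving with a Gaussian" concrete, here is a minimal one-dimensional sketch that does not use OpenCV. The function names are my own, and the border handling (repeating the edge sample) is an assumption chosen for simplicity; it is not necessarily what cv::GaussianBlur() does at the image border.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Builds a normalized 1-D Gaussian kernel.
// Assumes ksize is odd and positive and sigma > 0.
std::vector<double> gaussianKernel1D(int ksize, double sigma) {
    std::vector<double> kernel(ksize);
    const int half = ksize / 2;
    double sum = 0.0;
    for (int i = 0; i < ksize; ++i) {
        const double d = i - half;  // distance from the kernel center
        kernel[i] = std::exp(-(d * d) / (2.0 * sigma * sigma));
        sum += kernel[i];
    }
    for (double& w : kernel) w /= sum;  // make the weights add up to 1
    return kernel;
}

// Convolves a 1-D signal with the kernel, repeating the edge samples
// at the borders (an assumption made for this sketch).
std::vector<double> smooth1D(const std::vector<double>& signal,
                             const std::vector<double>& kernel) {
    const int n = static_cast<int>(signal.size());
    const int k = static_cast<int>(kernel.size());
    const int half = k / 2;
    std::vector<double> out(signal.size(), 0.0);
    for (int i = 0; i < n; ++i) {
        for (int j = 0; j < k; ++j) {
            const int src = std::min(std::max(i + j - half, 0), n - 1);
            out[i] += kernel[j] * signal[src];
        }
    }
    return out;
}
```

A 2-D Gaussian blur can be built from this by applying the same 1-D pass along the rows and then along the columns, since the Gaussian kernel is separable.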

#include <opencv2/opencv.hpp>

int main(int argc, char** argv) {

cv::Mat image = cv::imread(argv[1], -1);

// Create some windows to show the input

// and output images in.

//

cv::namedWindow("Example2_5-in", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Example2_5-out", cv::WINDOW_AUTOSIZE);

// Create a window to show our input image

//

cv::imshow("Example2_5-in", image);

// Create an image to hold the smoothed output

//

cv::Mat out;

// Do the smoothing

// ( Note: Could use GaussianBlur(), blur(), medianBlur() or bilateralFilter(). )

//

cv::GaussianBlur(image, out, cv::Size(5, 5), 3, 3);

//blurred by a 5 × 5 Gaussian convolution filter

//Gaussian kernel should always be given in odd numbers

//input : image ; written to out

cv::GaussianBlur(out, out, cv::Size(5, 5), 3, 3);

// Show the smoothed image in the output window

//

cv::imshow("Example2_5-out", out);

// Wait for the user to hit a key, windows will self destruct

//

cv::waitKey(0);

return 0;

}

Something slightly more complicated

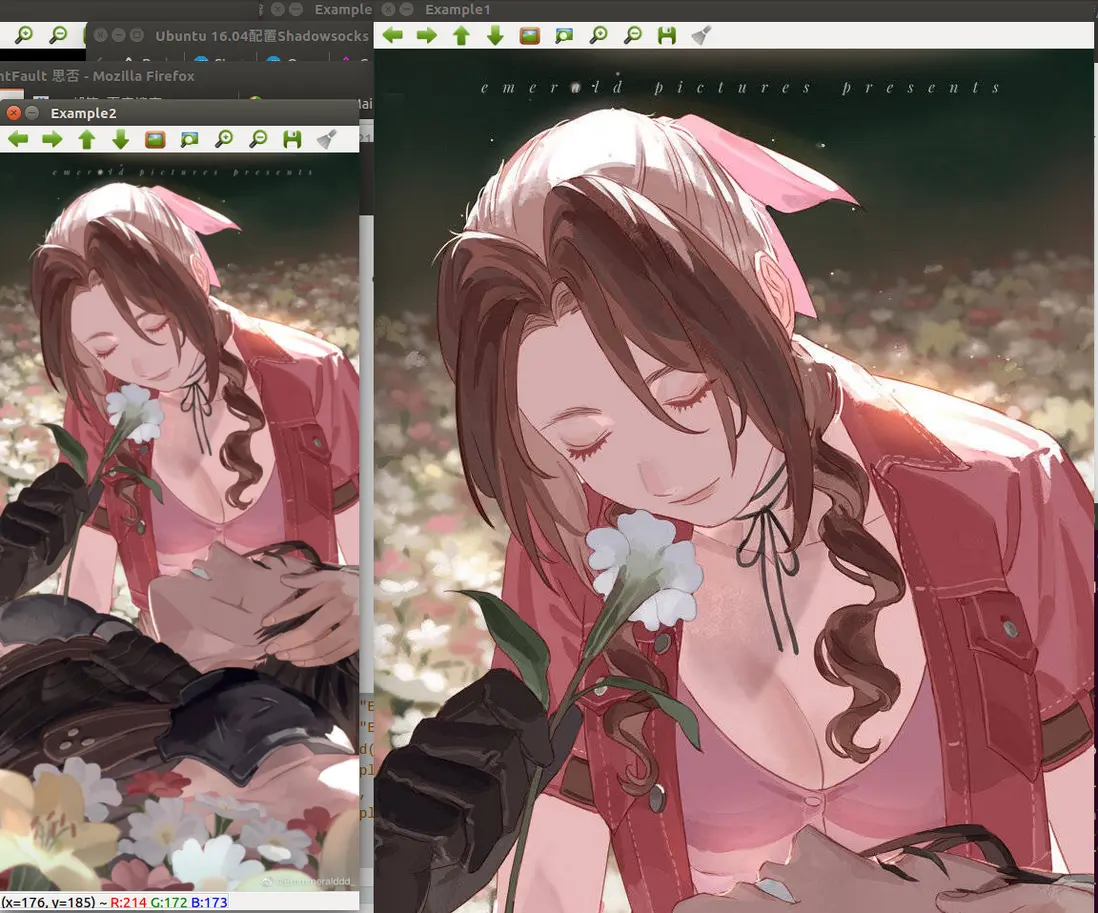

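The next example uses Gaussian blurring to downsample an image by a factor of 2.

Downsampling means shrinking the image. It is typically used to:

1. make the image fit the size of the display area;

2. generate a thumbnail of the image.

scale space (image pyramid)

Background: signal processing and the Nyquist-Shannon Sampling Theorem.

Downsampling a signal is equivalent to convolving with a series of delta functions.

high-pass filter: the term that appears in the book's text; note that band-limiting a signal before downsampling actually calls for a low-pass filter, which is what a Gaussian blur is.

band-limit: restrict the frequencies in a signal to a given range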

Example 2-6

cv::pyrDown()

#include <opencv2/opencv.hpp>

int main(int argc, char** argv) {

cv::Mat img1, img2;

cv::namedWindow("Example1", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Example2", cv::WINDOW_AUTOSIZE);

img1 = cv::imread(argv[1]);

cv::imshow("Example1", img1);

cv::pyrDown(img1, img2);

cv::imshow("Example2", img2);

cv::waitKey(0);

return 0;

}

Edge detection

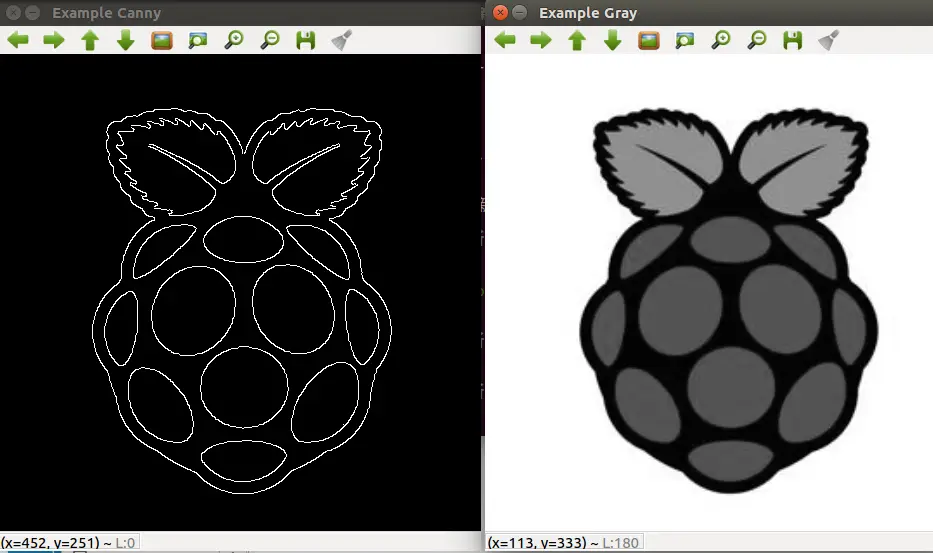

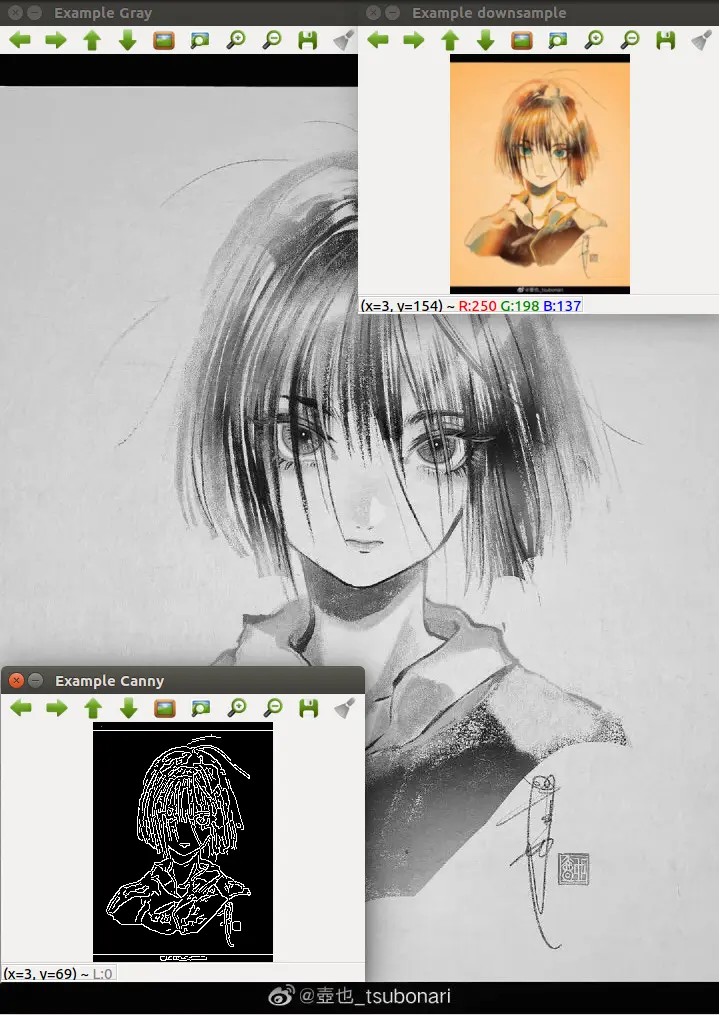

Example2-7

- Canny edge detector

Convert the image to a single-channel grayscale image first.

cv::Canny()

cv::cvtColor() converts the (BGR) image to grayscale

cv::COLOR_BGR2GRAY
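For a sense of what the BGR-to-gray conversion computes per pixel, here is a sketch of the weighted sum given in the OpenCV color-conversion documentation, Y = 0.299 R + 0.587 G + 0.114 B. The function name is made up for illustration, and the rounding here is plain floating-point rounding, so individual values may differ by one from what cv::cvtColor() produces.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Gray value of one pixel from its blue, green and red components
// (arguments are in OpenCV's default B, G, R channel order).
std::uint8_t bgrToGray(std::uint8_t blue, std::uint8_t green, std::uint8_t red) {
    const double y = 0.299 * red + 0.587 * green + 0.114 * blue;
    // Round to nearest and clamp to the 8-bit range.
    const long rounded = std::lround(y);
    return static_cast<std::uint8_t>(std::min(255L, std::max(0L, rounded)));
}
```

Green carries the largest weight, so a pure green pixel comes out much brighter in gray than a pure blue one.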

#include <opencv2/opencv.hpp>

int main( int argc, char** argv ) {

cv::Mat img_rgb, img_gry, img_cny;

cv::namedWindow( "Example Gray", cv::WINDOW_AUTOSIZE );

cv::namedWindow( "Example Canny", cv::WINDOW_AUTOSIZE );

img_rgb = cv::imread( argv[1] );

cv::cvtColor( img_rgb, img_gry, cv::COLOR_BGR2GRAY);

cv::imshow( "Example Gray", img_gry );

cv::Canny( img_gry, img_cny, 10, 100, 3, true );

cv::imshow( "Example Canny", img_cny );

cv::waitKey(0);

}

Example2-8

Downsample twice and then run Canny: the pyramid-down operator and the Canny subroutine combined in a simple image pipeline

#include <opencv2/opencv.hpp>

int main(int argc, char** argv) {

cv::Mat img_rgb, img_gry, img_cny, img_pyr, img_pyr2;

cv::namedWindow("Example Gray", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Example Canny", cv::WINDOW_AUTOSIZE);

img_rgb = cv::imread(argv[1]);

cv::cvtColor(img_rgb, img_gry, cv::COLOR_BGR2GRAY);

cv::pyrDown(img_gry, img_pyr);

cv::pyrDown(img_pyr, img_pyr2);

cv::imshow("Example Gray", img_gry);

cv::Canny(img_pyr2, img_cny, 10, 100, 3, true);

cv::imshow("Example Canny", img_cny);

cv::waitKey(0);

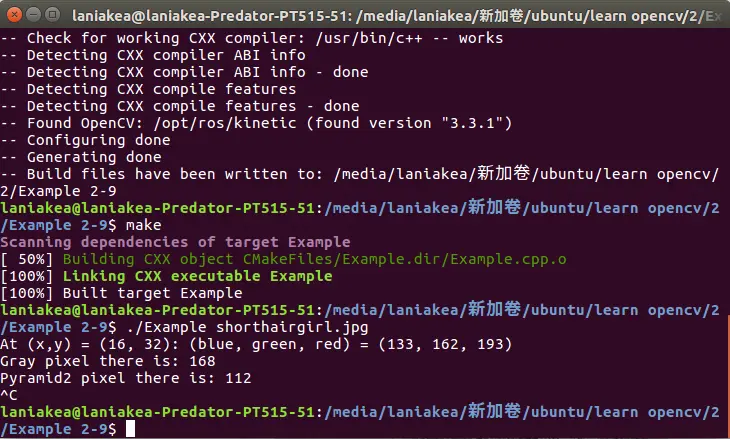

}Example 2-9

#include <opencv2/opencv.hpp>

#include <iostream>

int main(int argc, char** argv) {

int x = 16, y = 32;

cv::Mat img_rgb, img_gry, img_cny, img_pyr, img_pyr2,img_pyr3;

cv::namedWindow("Example Gray", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Example Canny", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Example downsample", cv::WINDOW_AUTOSIZE);

img_rgb = cv::imread(argv[1]);

cv::Vec3b intensity = img_rgb.at< cv::Vec3b >(y, x);

uchar blue = intensity[0];

uchar green = intensity[1];

uchar red = intensity[2];

std::cout << "At (x,y) = (" << x << ", " << y <<

"): (blue, green, red) = (" <<

(unsigned int)blue <<

", " << (unsigned int)green << ", " <<

(unsigned int)red << ")" << std::endl;

cv::cvtColor(img_rgb, img_gry, cv::COLOR_BGR2GRAY);

std::cout << "Gray pixel there is: " <<

(unsigned int)img_gry.at<uchar>(y, x) << std::endl;

cv::pyrDown(img_gry, img_pyr);

cv::pyrDown(img_pyr, img_pyr2);

cv::imshow("Example Gray", img_gry);

cv::Canny(img_pyr2, img_cny, 10, 100, 3, true);

x /= 4; y /= 4;

std::cout << "Pyramid2 pixel there is: " <<

(unsigned int)img_pyr2.at<uchar>(y, x) << std::endl;

img_cny.at<uchar>(y, x) = 128; // Set the Canny pixel there to 128; at() takes (row, col), i.e. (y, x)

cv::pyrDown(img_rgb, img_pyr3);

cv::pyrDown(img_pyr3, img_pyr3);

cv::imshow("Example downsample", img_pyr3);

cv::imshow("Example Canny", img_cny);

cv::waitKey(0);

}

I think this picture looks quite nice; if it infringes anyone's rights, I will take it down.
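A detail that is easy to trip over in the pixel-access example: cv::Mat::at() takes the row first and the column second, so a point (x, y) is read as at(y, x). The sketch below mimics that with a tiny row-major image type; GrayImage and pyramidCoord are hypothetical names for illustration, not OpenCV types.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Minimal row-major, single-channel image used only for this sketch.
struct GrayImage {
    int rows;
    int cols;
    std::vector<std::uint8_t> data;

    GrayImage(int r, int c)
        : rows(r), cols(c), data(static_cast<std::size_t>(r) * c, 0) {}

    // Same argument order as cv::Mat::at(): row (y) first, column (x) second.
    std::uint8_t& at(int row, int col) {
        return data[static_cast<std::size_t>(row) * cols + col];
    }
};

// Approximate coordinate of a non-negative pixel position after `levels`
// halvings, matching the x /= 4; y /= 4; step used for two pyramid levels.
int pyramidCoord(int coord, int levels) {
    return coord >> levels;
}
```

With x = 16 and y = 32, two pyramid levels give (4, 8), and the pixel is then addressed as at(8, 4).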

Reading from a camera

analogous to: similar or comparable to

Example 2-10

#include <opencv2/opencv.hpp>

#include <iostream>

int main(int argc, char* argv[]) {

cv::namedWindow("Example2_11", cv::WINDOW_AUTOSIZE);

cv::namedWindow("Log_Polar", cv::WINDOW_AUTOSIZE);

// ( Note: could capture from a camera by giving a camera id as an int.)

//

cv::VideoCapture capture(argv[1]);

double fps = capture.get(cv::CAP_PROP_FPS); // frame rate of the input video, in frames per second

cv::Size size(

(int)capture.get(cv::CAP_PROP_FRAME_WIDTH),

(int)capture.get(cv::CAP_PROP_FRAME_HEIGHT)

);

//convert the frame to log-polar format

cv::VideoWriter writer;

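writer.open(argv[2] // name of the new file

, cv::VideoWriter::fourcc('M', 'J', 'P', 'G') // video codec; VideoWriter::fourcc also works in OpenCV 4

, fps // replay frame rate

, size // frame size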

);

cv::Mat logpolar_frame, bgr_frame;

for (;;) {

capture >> bgr_frame;

if (bgr_frame.empty()) break; // end if done

cv::imshow("Example2_11", bgr_frame);

cv::logPolar(

bgr_frame, // Input color frame

logpolar_frame, // Output log-polar frame

cv::Point2f( // Centerpoint for log-polar transformation

bgr_frame.cols / 2, // x

bgr_frame.rows / 2 // y

),

40, // Magnitude (scale parameter)

cv::WARP_FILL_OUTLIERS // Fill outliers with 'zero'

);

cv::imshow("Log_Polar", logpolar_frame);

writer << logpolar_frame;

char c = cv::waitKey(10);

if (c == 27) break; // allow the user to break out

}

capture.release();

}

argument: a value passed to a function (parameter)

replay frame rate: the frame rate at which the written video is played back
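The codec argument passed to the writer is a four-character code: four bytes packed into a single integer, first character in the lowest byte. The sketch below reproduces that packing without OpenCV. packFourcc is a made-up name, and it returns an unsigned value for simplicity, whereas OpenCV's own helper returns an int.

```cpp
#include <cstdint>

// Packs four characters into one 32-bit code, first character in the
// least significant byte.
constexpr std::uint32_t packFourcc(char c1, char c2, char c3, char c4) {
    return static_cast<std::uint32_t>(static_cast<unsigned char>(c1)) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(c2)) << 8) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(c3)) << 16) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(c4)) << 24);
}
```

For 'M', 'J', 'P', 'G' this gives 0x47504A4D: reading the bytes from low to high spells M (0x4D), J (0x4A), P (0x50), G (0x47).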