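Contents

1. Download the PBHC Source Code

2. Create a Virtual Environment

3. Install Isaac Gym

4. Install rsl_rl

5. Install the Unitree Reinforcement Learning Libraries

5.1 Install unitree_rl_gym

5.2 unitree_sdk2py (optional)

6. Reinforcement Learning Training

6.1 Training Command

6.2 Play: Viewing the Result

6.3 MuJoCo Simulation Verification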

This post records the entire reproduction process.

1. Download the PBHC Source Code

First, download the source code:

git clone https://github.com/TeleHuman/PBHC.git

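2. Create a Virtual Environment

# Create the conda virtual environment

conda create -n unitree-rl python=3.8

# Activate the virtual environment

conda activate unitree-rl

# Install PyTorch

conda install pytorch==2.3.1 torchvision==0.18.1 torchaudio==2.3.1 pytorch-cuda -c pytorch -c nvidia

# Alternatively, install with pip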

pip install torch==2.3.1 torchvision==0.18.1 torchaudio==2.3.1 --index-url https://download.pytorch.org/whl/cu118
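After either install command, a quick check from inside the activated environment can confirm which PyTorch build was picked up and whether it sees a GPU. The sketch below is only a convenience. The helper name `describe_torch` is made up for this post, and it returns a small report instead of failing when PyTorch is absent.

```python
import importlib.util


def describe_torch():
    """Return a small report about the PyTorch install (hypothetical helper)."""
    if importlib.util.find_spec("torch") is None:
        return {"installed": False}
    import torch  # imported lazily so the check also runs without PyTorch

    return {
        "installed": True,
        "version": torch.__version__,      # expected to start with 2.3.1 here
        "cuda_build": torch.version.cuda,  # None for CPU-only builds
        "cuda_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    print(describe_torch())
```

If `cuda_available` is reported as false on a machine with an Nvidia GPU, the installed wheel and the driver are the first things worth checking before moving on to Isaac Gym.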

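3. Install Isaac Gym

3.1 Download

Isaac Gym is a rigid-body simulation and training framework provided by Nvidia. First, download the package from the Nvidia website.

3.2 Extract and install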

tar -zxvf IsaacGym_Preview_4_Package.tar.gz

cd isaacgym/python

pip install -e .

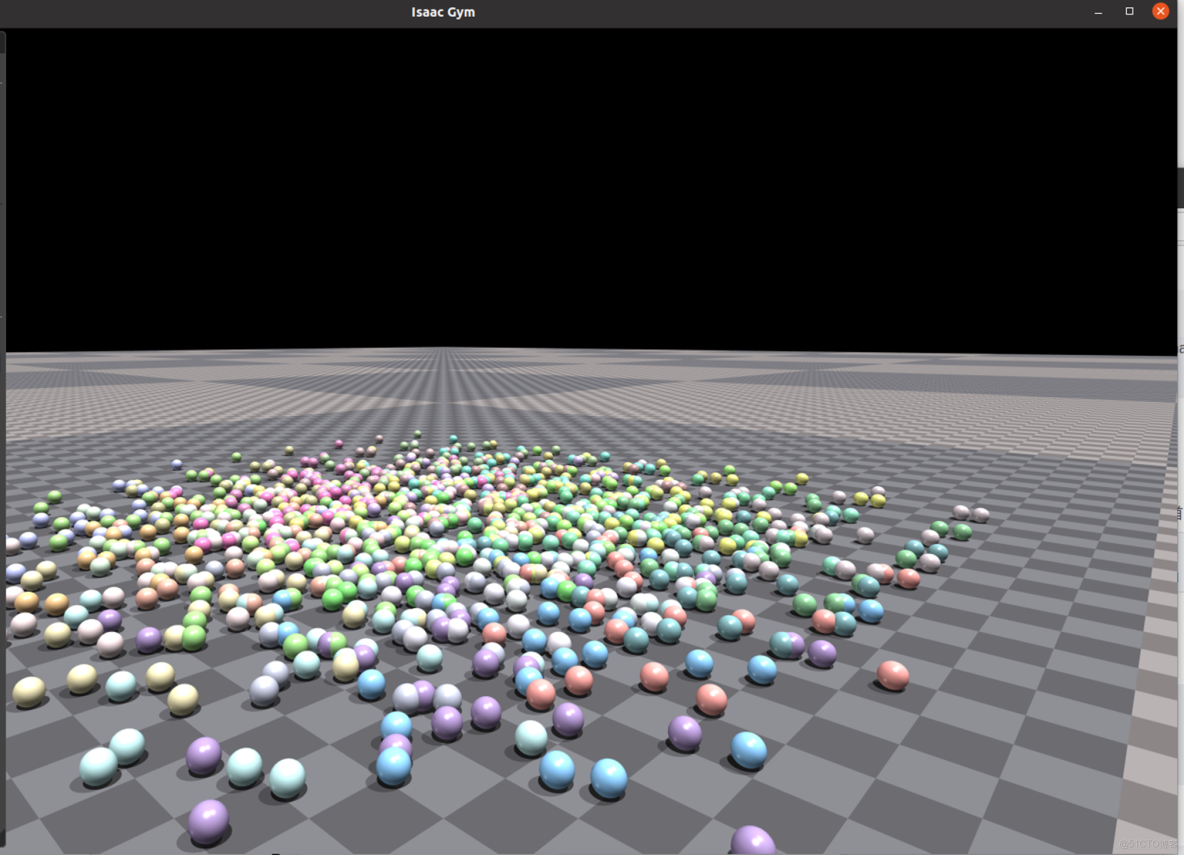

3.3 Verify the installation

cd examples

python 1080_balls_of_solitude.py

4. Install rsl_rl

# 1. Clone the repository

git clone https://github.com/leggedrobotics/rsl_rl.git -b v1.0.2

# 2. Install

cd rsl_rl

pip install -e .

5. Install the Unitree Reinforcement Learning Libraries

5.1 Install unitree_rl_gym

git clone https://github.com/unitreerobotics/unitree_rl_gym.git

cd unitree_rl_gym

pip install -e .

5.2 unitree_sdk2py (optional)

unitree_sdk2py is a library for communicating with the real robot. Install it if you need to deploy the trained model on a physical robot.

git clone https://github.com/unitreerobotics/unitree_sdk2_python.git

cd unitree_sdk2_python

pip install -e .
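With all the editable installs done, it can be useful to confirm that each package is visible to the environment's interpreter. The sketch below looks packages up without importing them, since Isaac Gym expects `isaacgym` to be imported before `torch` within one process and a careless import order would raise an error of its own. The import names in `EXPECTED` are assumptions based on the repositories above, and `missing_packages` is a made-up helper.

```python
import importlib.util

# Import names assumed for the packages installed above.
EXPECTED = ["torch", "isaacgym", "rsl_rl", "legged_gym", "unitree_sdk2py"]


def missing_packages(names):
    """Return the names that cannot be found on the current Python path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages(EXPECTED)
    print("missing:", missing if missing else "none")
```

Since unitree_sdk2py is optional, seeing it in the missing list is fine when no real-robot deployment is planned.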

6. Reinforcement Learning Training

6.1 Training Command

python legged_gym/scripts/train.py --task=g1 --headless

Note: training fails with an error at around iteration 9000. The following links describe the fix:

https://github.com/unitreerobotics/unitree_rl_gym/issues/69

https://github.com/leggedrobotics/rsl_rl/pull/12/files

Specifically, add and remove the corresponding lines in rsl_rl/utils/utils.py:

trajectory_lengths_list = trajectory_lengths.tolist()

# Extract the individual trajectories

trajectories = torch.split(tensor.transpose(1, 0).flatten(0, 1),trajectory_lengths_list)

# add at least one full length trajectory

trajectories = trajectories + (torch.zeros(tensor.shape[0], tensor.shape[-1], device=tensor.device), )

# pad the trajectories to the length of the longest trajectory

padded_trajectories = torch.nn.utils.rnn.pad_sequence(trajectories)

# remove the added tensor

padded_trajectories = padded_trajectories[:, :-1]

# trajectory_masks = trajectory_lengths > torch.arange(0, tensor.shape[0], device=tensor.device).unsqueeze(1)  # presumably the line the patch removes; superseded by the assignment below

trajectory_masks = trajectory_lengths > torch.arange(0, padded_trajectories.shape[0], device=tensor.device).unsqueeze(1)

return padded_trajectories, trajectory_masks

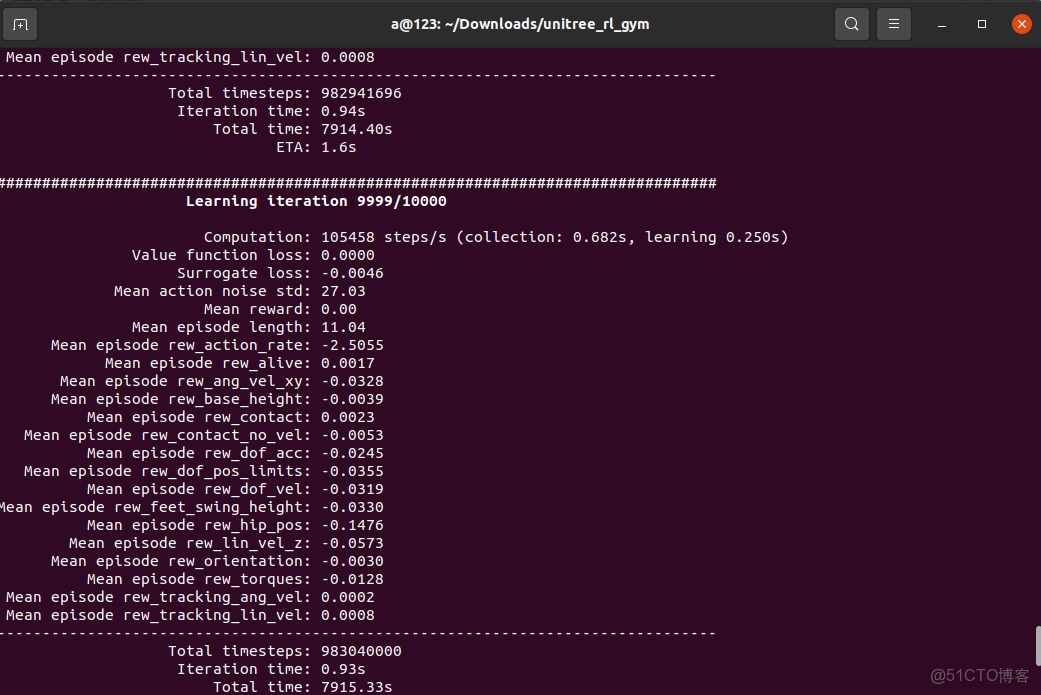

def unpad_trajectories(trajectories, masks):

The training results are as follows:

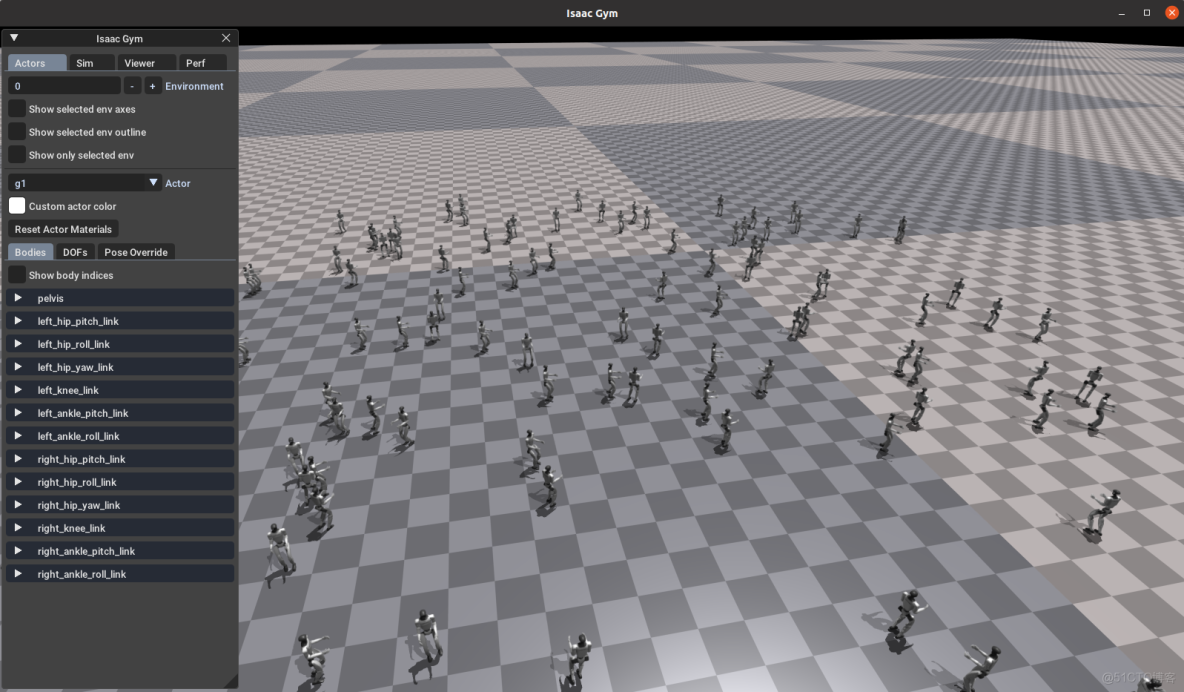
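To see why the extra full-length trajectory in the utils.py patch matters, the following torch-free sketch mimics only the length bookkeeping. It assumes that the mask has one row per rollout step while padding stops at the longest trajectory, so the two disagree whenever every environment terminates mid-rollout. The helper names are made up for illustration and are not part of rsl_rl.

```python
def split_lengths(dones_per_env):
    """Trajectory lengths, treating the last step of every env as done."""
    lengths = []
    for dones in dones_per_env:
        run = 0
        for step, done in enumerate(dones):
            run += 1
            if done or step == len(dones) - 1:
                lengths.append(run)
                run = 0
    return lengths


def padded_length(lengths, num_steps, add_full_length):
    """Length a pad-to-longest operation would produce."""
    candidates = list(lengths)
    if add_full_length:
        candidates.append(num_steps)  # stands in for the dummy all-zero trajectory
    return max(candidates)


num_steps = 4
# Two envs that both terminate mid-rollout: no trajectory spans all 4 steps.
dones = [[0, 1, 0, 0], [0, 0, 1, 0]]
lengths = split_lengths(dones)

print(lengths)                                   # [2, 2, 3, 1]
print(padded_length(lengths, num_steps, False))  # 3, but the mask has 4 rows
print(padded_length(lengths, num_steps, True))   # 4, matches the mask
```

A mismatch like this only shows up once no environment survives a whole rollout, which may be why the error appears late in training and not at the start.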

6.2 Play: Viewing the Result

To view the training result in Gym, run the following command:

python legged_gym/scripts/play.py --task=g1

# Spawn only one robot (a single environment)

python legged_gym/scripts/play.py --task=g1 --num_envs=1

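# Test with the model from a specific run (load_run does not need the logs path prefix)

python legged_gym/scripts/play.py --task=g1 --num_envs=1 --load_run=Oct10_03-50-19_

# Test with a specific checkpoint from a specific run (load_run does not need the logs path prefix)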

python legged_gym/scripts/play.py --task=g1 --num_envs=1 --load_run=Oct10_03-50-19_ --checkpoint=5000

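The results are as follows:

Note: in practice, training for about 7000 iterations is enough. Beyond roughly 7000 iterations the results start to look abnormal.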

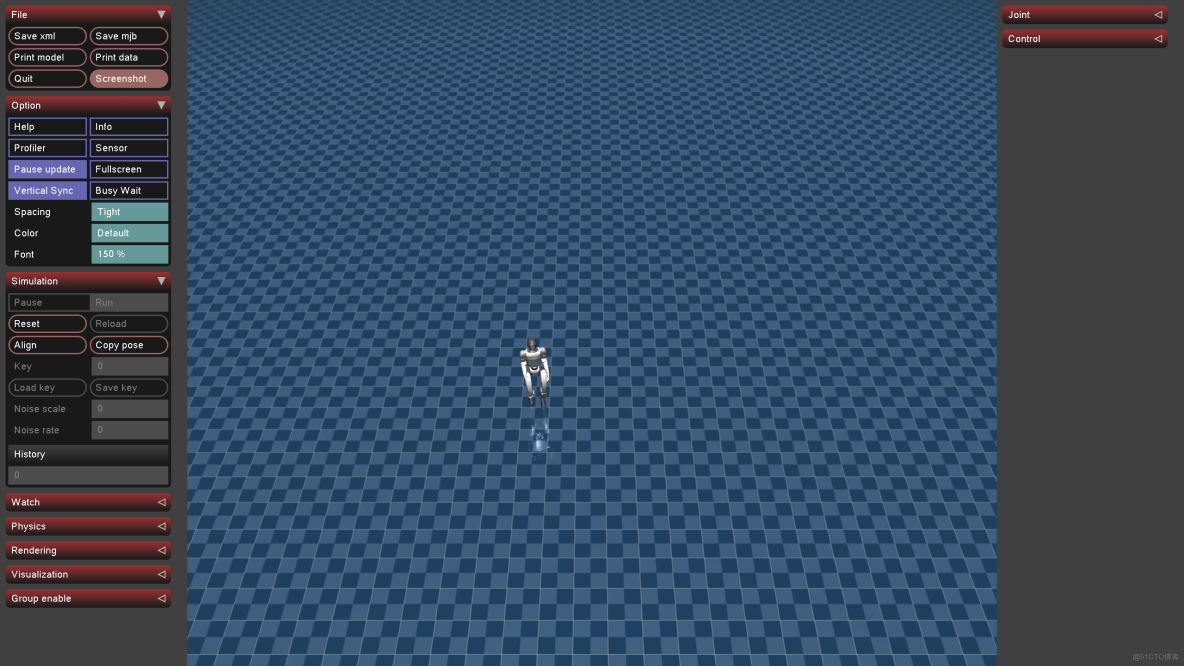
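Because the later checkpoints of this run looked abnormal, it may be handy to pick the newest checkpoint at or below a cutoff and pass it to `--checkpoint`. The sketch below assumes checkpoints are saved as `model_<iteration>.pt` inside the run directory, which is an assumption about the log layout and should be checked against the actual `logs` folder. Both helper names are hypothetical.

```python
import re
from pathlib import Path


def checkpoint_iterations(run_dir):
    """Sorted iteration numbers of files named model_<iteration>.pt (assumed layout)."""
    pattern = re.compile(r"model_(\d+)\.pt$")
    found = []
    for path in Path(run_dir).iterdir():
        match = pattern.match(path.name)
        if match:
            found.append(int(match.group(1)))
    return sorted(found)


def latest_checkpoint_up_to(run_dir, max_iteration):
    """Largest saved iteration not exceeding max_iteration, or None if there is none."""
    eligible = [i for i in checkpoint_iterations(run_dir) if i <= max_iteration]
    return eligible[-1] if eligible else None
```

For example, `latest_checkpoint_up_to("logs/g1/Oct10_03-50-19_", 7000)` would return the iteration number to use, provided the run really lives under that path.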

6.3 MuJoCo Simulation Verification

python deploy/deploy_mujoco/deploy_mujoco.py g1.yaml

Modify policy_path in g1.yaml:

#policy_path: "{LEGGED_GYM_ROOT_DIR}/deploy/pre_train/g1/motion.pt"

policy_path: "{LEGGED_GYM_ROOT_DIR}/logs/g1/exported/policies/policy_lstm_1.pt"

xml_path: "{LEGGED_GYM_ROOT_DIR}/resources/robots/g1_description/scene.xml"

# Total simulation time

simulation_duration: 60.0

# Simulation time step

simulation_dt: 0.002

# Controller update frequency (meets the requirement of simulation_dt * control_decimation = 0.02 s, i.e. 50 Hz)

control_decimation: 10

kps: [100, 100, 100, 150, 40, 40, 100, 100, 100, 150, 40, 40]

kds: [2, 2, 2, 4, 2, 2, 2, 2, 2, 4, 2, 2]

default_angles: [-0.1, 0.0, 0.0, 0.3, -0.2, 0.0,

-0.1, 0.0, 0.0, 0.3, -0.2, 0.0]

ang_vel_scale: 0.25

dof_pos_scale: 1.0

dof_vel_scale: 0.05

action_scale: 0.25

cmd_scale: [2.0, 2.0, 0.25]

num_actions: 12

num_obs: 47

cmd_init: [0.5, 0, 0]

The result looks quite good.
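When editing g1.yaml by hand, a few consistency checks can catch slips before launching the simulation. The sketch below copies values from the excerpt above into a plain dictionary and checks only what the excerpt itself states: the 0.02 s control period and the per-joint list lengths. `check_config` is a made-up helper, not part of the deploy script.

```python
# Values copied from the g1.yaml excerpt above.
config = {
    "simulation_dt": 0.002,
    "control_decimation": 10,
    "kps": [100, 100, 100, 150, 40, 40, 100, 100, 100, 150, 40, 40],
    "kds": [2, 2, 2, 4, 2, 2, 2, 2, 2, 4, 2, 2],
    "default_angles": [-0.1, 0.0, 0.0, 0.3, -0.2, 0.0,
                       -0.1, 0.0, 0.0, 0.3, -0.2, 0.0],
    "cmd_scale": [2.0, 2.0, 0.25],
    "cmd_init": [0.5, 0, 0],
    "num_actions": 12,
}


def check_config(cfg):
    """Return a list of human-readable problems; an empty list means all checks passed."""
    problems = []
    control_dt = cfg["simulation_dt"] * cfg["control_decimation"]
    if abs(control_dt - 0.02) > 1e-9:
        problems.append(f"control period is {control_dt} s, expected 0.02 s (50 Hz)")
    for key in ("kps", "kds", "default_angles"):
        if len(cfg[key]) != cfg["num_actions"]:
            problems.append(f"{key} has {len(cfg[key])} entries, expected {cfg['num_actions']}")
    if len(cfg["cmd_scale"]) != len(cfg["cmd_init"]):
        problems.append("cmd_scale and cmd_init differ in length")
    return problems


print(check_config(config))  # [] for the values shown in this post
```

Changing `simulation_dt` without adjusting `control_decimation` is the kind of edit this would flag, since the policy was trained at a 50 Hz control rate.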