Relation Transformer (RelTR), to directly predict a fixed-size set of <subject-predicate-object> triplets

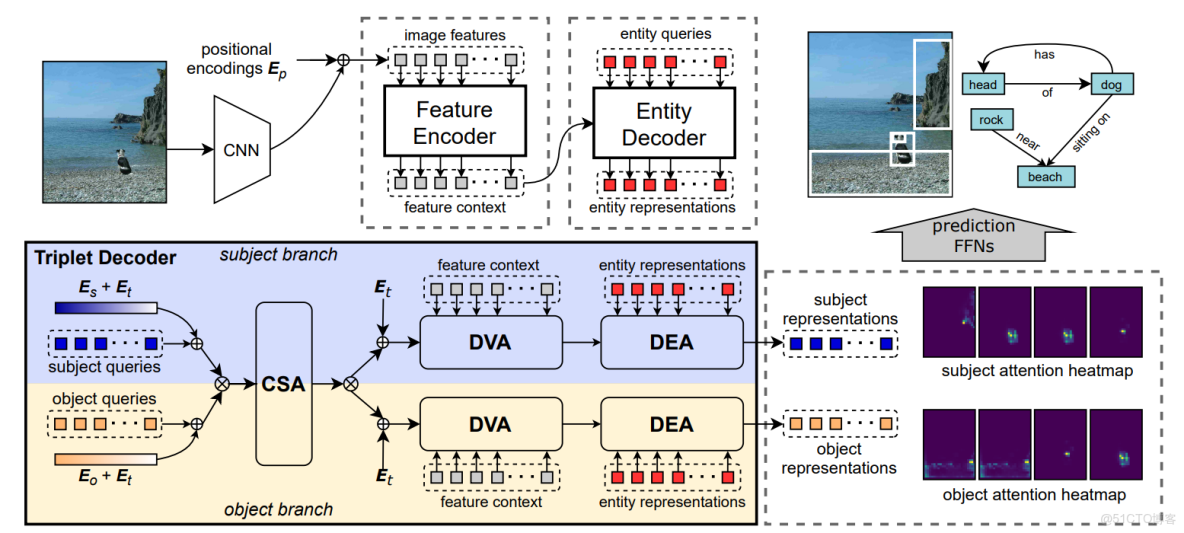

This entity detection framework is built upon the standard Transformer encoder-decoder architecture.

First, a CNN backbone generates a feature map

With the self-attention mechanism, the encoder computes a new feature context

The decoder transforms


RelTR has an encoder-decoder architecture, which directly predicts

The entity decoder captures the entity representations from DETR.

The triplet decoder has a subject branch and an object branch.

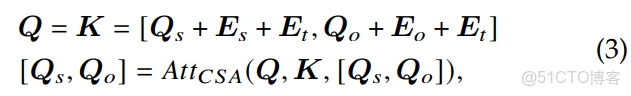

Given

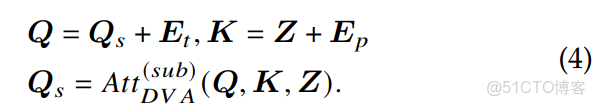

the triplet decoder layer reasons about the feature context

to directly output the information of

without inferring the possible predicates between all entity pairs.

That is, as I understand it, the inputs to the triplet decoder layer are roughly:

- the feature context

- entity representations

- subject entity queries

- object entity queries

- subject encodings

- object encodings

- triplet encodings

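Note that the attention mechanism computes, for every element, a weighted sum over all elements, and it does not depend on position or order. Permuting the elements of the input sequence does not change what the attention layer outputs (strictly speaking, the outputs are permuted in the same way, but the set of output vectors is unchanged).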

For this reason, the triplet encodings, subject encodings, and object encodings are introduced.
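The order-independence described above can be checked with a small sketch. This is a toy single-head self-attention with identity projections (Q = K = V), not RelTR's actual layer; `self_attention`, `e_sub`, and `e_obj` are hypothetical names and values chosen only for illustration. It shows two things: permuting the inputs only permutes the outputs, and two queries with identical content become distinguishable once different encodings are added to them.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Toy self-attention with identity projections (Q = K = V = tokens)."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


def add(a, b):
    """Element-wise sum of two vectors."""
    return [x + y for x, y in zip(a, b)]


# (1) Permuting the inputs permutes the outputs in the same way.
tokens = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
perm = [2, 0, 1]
out = self_attention(tokens)
out_perm = self_attention([tokens[i] for i in perm])
equivariant = all(
    math.isclose(a, b, abs_tol=1e-9)
    for i, p in enumerate(perm)
    for a, b in zip(out_perm[i], out[p])
)

# (2) Identical queries get identical outputs unless an encoding tells them apart.
q_sub = [0.5, 0.5]
q_obj = [0.5, 0.5]
e_sub = [1.0, 0.0]  # hypothetical subject encoding
e_obj = [0.0, 1.0]  # hypothetical object encoding
plain = self_attention([q_sub, q_obj])
tagged = self_attention([add(q_sub, e_sub), add(q_obj, e_obj)])

print("permutation-equivariant:", equivariant)
print("without encodings, outputs identical:", plain[0] == plain[1])
print("with encodings, outputs differ:", tagged[0] != tagged[1])
```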

Based on my understanding, the computations corresponding to the figure above (CSA, DVA, DEA) are as follows:

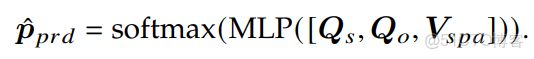

3.2.5 Final Inference

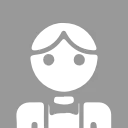

A complete triplet includes the predicate label and the class labels as well as the bounding box coordinates of the subject and object.

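As a reading aid, the contents of one complete triplet can be written down as a small record. This is only a sketch: the class name `Triplet`, the field names, the `(cx, cy, w, h)` box layout, and the example labels are assumptions for illustration, not identifiers from the paper or its code.

```python
from dataclasses import dataclass
from typing import Tuple

# Assumed box layout: normalized center x, center y, width, height.
Box = Tuple[float, float, float, float]


@dataclass(frozen=True)
class Triplet:
    """One predicted relationship: labels plus subject and object boxes."""
    subject_label: str
    predicate_label: str
    object_label: str
    subject_box: Box
    object_box: Box


# Illustrative values only; not taken from any dataset or result.
example = Triplet(
    subject_label="person",
    predicate_label="riding",
    object_label="horse",
    subject_box=(0.45, 0.40, 0.20, 0.50),
    object_box=(0.50, 0.60, 0.40, 0.45),
)
print(f"<{example.subject_label}-{example.predicate_label}-{example.object_label}>")
```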

The subject representations from the last decoder layer

We utilize two independent feed-forward networks with the same structure to predict the height, width, and normalized center coordinates of subject and object boxes.

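A minimal sketch of the two box heads follows, in pure Python with random, untrained weights. The layer sizes (`dims`), the ReLU hidden activation, the sigmoid output, and the `(cx, cy, w, h)` ordering are assumptions in the style of DETR-like box heads; the helper names `make_ffn`, `ffn_forward`, and `to_corners` are hypothetical. The point is only that the subject and object heads share a structure but not weights, and that normalized outputs are rescaled to pixels afterwards.

```python
import math
import random


def make_ffn(dims, rng):
    """Random (untrained) weights for an MLP with the given layer sizes."""
    return [
        ([[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)],
         [0.0] * n_out)
        for n_in, n_out in zip(dims[:-1], dims[1:])
    ]


def ffn_forward(layers, x):
    """ReLU on hidden layers, sigmoid on the output so values lie in (0, 1)."""
    for idx, (weights, bias) in enumerate(layers):
        x = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]
        if idx < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return [1.0 / (1.0 + math.exp(-v)) for v in x]


def to_corners(box, img_w, img_h):
    """Convert normalized (cx, cy, w, h) to pixel (x0, y0, x1, y1)."""
    cx, cy, w, h = box
    return ((cx - w / 2) * img_w, (cy - h / 2) * img_h,
            (cx + w / 2) * img_w, (cy + h / 2) * img_h)


rng = random.Random(0)
dims = [8, 8, 4]  # hypothetical sizes: input dim 8, hidden 8, output 4

# Same structure, separately initialized weights: two independent heads.
subject_head = make_ffn(dims, rng)
object_head = make_ffn(dims, rng)

# Stand-ins for one subject and one object representation from the decoder.
q_sub = [rng.uniform(-1, 1) for _ in range(dims[0])]
q_obj = [rng.uniform(-1, 1) for _ in range(dims[0])]

sub_box = ffn_forward(subject_head, q_sub)
obj_box = ffn_forward(object_head, q_obj)
print("subject box (pixels):", to_corners(sub_box, 640, 480))
print("object box (pixels):", to_corners(obj_box, 640, 480))
```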

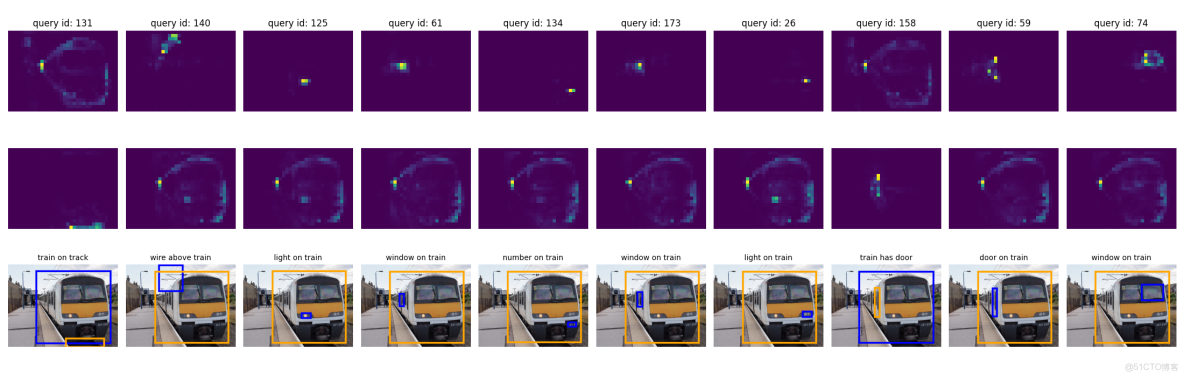

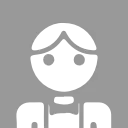

During DVA, attention heat maps are produced; after further computation, they are used as spatial features.