LLaMA-Factory

Zero-code fine-tuning of large language models through the WebUI

Environment setup

| OS | GPU model | Python version |
| --- | --- | --- |
| Rocky Linux 9.2 | NVIDIA GeForce RTX 4090 | Python 3.9 |

Training the model

GitHub download link (the framework)

After downloading the framework, configure it as follows:

```bash
# Create a virtual environment
conda create -n py39 python=3.9 -y

# Enter the virtual environment
conda activate py39
```

The required packages are listed below:

```bash
# Alternatively, create requirements.txt by hand with every package listed here
cat > requirements.txt << 'EOF'
accelerate==1.7.0
aiofiles==23.2.1
aiohttp==3.13.0
aioprometheus==23.12.0
airportsdata==20250909
annotated-types==0.7.0
antlr4-python3-runtime==4.9.3
anyio==4.11.0
astor==0.8.1
async-timeout==5.0.1
attrs==25.4.0
audioread==3.0.1
autoawq==0.2.9
av==15.1.0
blake3==1.0.7
cachetools==6.2.1
cbor2==5.7.0
certifi==2025.10.5
cffi==2.0.0
charset-normalizer==3.4.4
click==8.1.8
cloudpickle==3.1.1
compressed-tensors==0.9.3
contourpy==1.3.0
cupy-cuda12x==13.6.0
cycler==0.12.1
datasets==3.6.0
decorator==5.2.1
Deprecated==1.2.18
depyf==0.18.0
dill==0.3.8
diskcache==5.6.3
distro==1.9.0
dnspython==2.7.0
docstring_parser==0.17.0
einops==0.8.1
email-validator==2.3.0
et_xmlfile==2.0.0
eval_type_backport==0.2.2
exceptiongroup==1.3.0
fastapi==0.119.0
fastapi-cli==0.0.13
fastapi-cloud-cli==0.3.1
fastrlock==0.8.3
ffmpy==0.6.3
filelock==3.19.1
fire==0.7.1
fonttools==4.60.1
frozendict==2.4.6
frozenlist==1.8.0
fsspec==2025.3.0
gguf==0.17.1
googleapis-common-protos==1.70.0
gradio==4.44.1
gradio_client==1.3.0
grpcio==1.75.1
h11==0.16.0
hf-xet==1.1.10
httpcore==1.0.9
httptools==0.7.1
httpx==0.28.1
huggingface-hub==0.35.3
idna==3.11
importlib_metadata==8.0.0
importlib_resources==6.5.2
interegular==0.3.3
Jinja2==3.1.6
jiter==0.11.0
joblib==1.5.2
jsonschema==4.25.1
jsonschema-specifications==2025.9.1
kiwisolver==1.4.7
lark==1.2.2
lazy_loader==0.4
librosa==0.11.0
llamafactory==0.9.3
llguidance==0.7.30
llvmlite==0.43.0
lm-format-enforcer==0.10.12
markdown-it-py==3.0.0
MarkupSafe==2.1.5
matplotlib==3.9.4
mdurl==0.1.2
mistral_common==1.8.5
mpmath==1.3.0
msgpack==1.1.2
msgspec==0.19.0
multidict==6.7.0
multiprocess==0.70.16
nest-asyncio==1.6.0
networkx==3.2.1
ninja==1.13.0
numba==0.60.0
numpy==1.26.4
nvidia-cublas-cu12==12.4.5.8
nvidia-cuda-cupti-cu12==12.4.127
nvidia-cuda-nvrtc-cu12==12.4.127
nvidia-cuda-runtime-cu12==12.4.127
nvidia-cudnn-cu12==9.1.0.70
nvidia-cufft-cu12==11.2.1.3
nvidia-cufile-cu12==1.13.1.3
nvidia-curand-cu12==10.3.5.147
nvidia-cusolver-cu12==11.6.1.9
nvidia-cusparse-cu12==12.3.1.170
nvidia-cusparselt-cu12==0.6.2
nvidia-nccl-cu12==2.21.5
nvidia-nvjitlink-cu12==12.4.127
nvidia-nvtx-cu12==12.4.127
omegaconf==2.3.0
openai==2.3.0
openai-harmony==0.0.4
opencv-python-headless==4.11.0.86
openpyxl==3.1.5
opentelemetry-api==1.26.0
opentelemetry-exporter-otlp==1.26.0
opentelemetry-exporter-otlp-proto-common==1.26.0
opentelemetry-exporter-otlp-proto-grpc==1.26.0
opentelemetry-exporter-otlp-proto-http==1.26.0
opentelemetry-proto==1.26.0
opentelemetry-sdk==1.26.0
opentelemetry-semantic-conventions==0.47b0
opentelemetry-semantic-conventions-ai==0.4.13
orjson==3.11.3
outlines==0.1.11
outlines_core==0.1.26
packaging==25.0
pandas==2.3.3
partial-json-parser==0.2.1.1.post6
peft==0.15.2
pillow==10.4.0
platformdirs==4.4.0
pooch==1.8.2
prometheus_client==0.23.1
prometheus-fastapi-instrumentator==7.1.0
propcache==0.4.1
protobuf==4.25.8
psutil==7.1.0
py-cpuinfo==9.0.0
pyarrow==21.0.0
pybase64==1.4.2
pycountry==24.6.1
pycparser==2.23
pydantic==2.10.6
pydantic_core==2.27.2
pydantic-extra-types==2.10.6
pydub==0.25.1
Pygments==2.19.2
pynvml==11.5.0
pyparsing==3.2.5
python-dateutil==2.9.0.post0
python-dotenv==1.1.1
python-json-logger==4.0.0
python-multipart==0.0.20
pytz==2025.2
PyYAML==6.0.3
pyzmq==27.1.0
quantile-python==1.1
ray==2.50.0
referencing==0.36.2
regex==2025.9.18
requests==2.32.5
rich==14.2.0
rich-toolkit==0.15.1
rignore==0.7.0
rpds-py==0.27.1
ruff==0.14.0
safetensors==0.6.2
scikit-learn==1.6.1
scipy==1.13.1
semantic-version==2.10.0
sentencepiece==0.2.1
sentry-sdk==2.41.0
setproctitle==1.3.7
setuptools==65.5.1
shellingham==1.5.4
shtab==1.7.2
six==1.17.0
sniffio==1.3.1
soundfile==0.13.1
soxr==1.0.0
sse-starlette==3.0.2
starlette==0.48.0
sympy==1.13.1
termcolor==3.1.0
threadpoolctl==3.6.0
tiktoken==0.12.0
tokenizers==0.21.1
tomlkit==0.12.0
torch==2.6.0
torchaudio==2.6.0
torchvision==0.21.0
tqdm==4.67.1
transformers==4.52.4
triton==3.2.0
trl==0.9.6
typer==0.19.2
typing_extensions==4.15.0
typing-inspection==0.4.2
tyro==0.8.14
tzdata==2025.2
urllib3==2.5.0
uvicorn==0.37.0
uvloop==0.21.0
vllm==0.8.5.post1
watchfiles==1.1.0
websockets==12.0
wheel==0.38.4
wrapt==1.17.3
xformers==0.0.29.post2
xgrammar==0.1.18
xlrd==2.0.2
xxhash==3.6.0
yarl==1.22.0
zipp==3.23.0
zstandard==0.25.0
EOF

# Install through the Tsinghua PyPI mirror for faster downloads
pip install -r requirements.txt -i https://pypi.tuna.tsinghua.edu.cn/simple/

# If you run into dependency conflicts, try installing without dependency resolution
pip install -r requirements.txt --no-deps -i https://pypi.tuna.tsinghua.edu.cn/simple/
```
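Since the --no-deps fallback skips pip's dependency resolution, it is worth confirming afterwards that what is installed actually matches the pins. The following is a minimal sketch built on the standard-library importlib.metadata; parse_pins, installed_version and find_mismatches are hypothetical helper names, and only exact name==version lines are checked. Local version labels such as +cu124 on torch builds are ignored. How strictly package names are normalized (Deprecated vs deprecated, gradio_client vs gradio-client) may depend on the Python version, so anything reported as missing is worth double-checking with pip show.

```python
import re
from importlib import metadata
from typing import Callable, Dict, List, Optional, Tuple

# Matches simple pins such as "torch==2.6.0"; other specifiers (>=, ~=) are skipped.
PIN_RE = re.compile(r"^\s*([A-Za-z0-9_.\-]+)\s*==\s*([^\s;#]+)")


def parse_pins(text: str) -> Dict[str, str]:
    """Return {package: pinned_version} for each 'name==version' line."""
    pins = {}
    for line in text.splitlines():
        match = PIN_RE.match(line)
        if match:
            pins[match.group(1)] = match.group(2)
    return pins


def installed_version(name: str) -> Optional[str]:
    """Installed version of a distribution, or None if it cannot be found."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def find_mismatches(
    pins: Dict[str, str],
    lookup: Callable[[str], Optional[str]] = installed_version,
) -> List[Tuple[str, str, Optional[str]]]:
    """Return (package, pinned, installed) for every pin that is missing or differs."""
    problems = []
    for name, wanted in sorted(pins.items()):
        got = lookup(name)
        # Drop a PEP 440 local label, e.g. "2.6.0+cu124" -> "2.6.0", before comparing.
        if got is None or got.split("+", 1)[0] != wanted:
            problems.append((name, wanted, got))
    return problems
```

Run it inside the activated environment, for example with `print(find_mismatches(parse_pins(open("requirements.txt", encoding="utf-8").read())))`; an empty list means every pin is satisfied. The lookup parameter exists so the comparison logic can be exercised without touching the real environment.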

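If dependency errors remain:

```bash
# Remove the conflicting packages completely so pip can re-resolve their dependencies
pip uninstall opencv-python-headless datasets fsspec numpy -y

# Reinstall and let pip pick compatible dependency versions automatically
pip install -i https://pypi.tuna.tsinghua.edu.cn/simple \
    llamafactory \
    opencv-python-headless
```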

Then start the WebUI on the first GPU:

```bash
export CUDA_VISIBLE_DEVICES=0 && llamafactory-cli webui
```

Once running, the WebUI is served on port 7860, for example at http://192.168.3.166:7860/.

Specify the model:

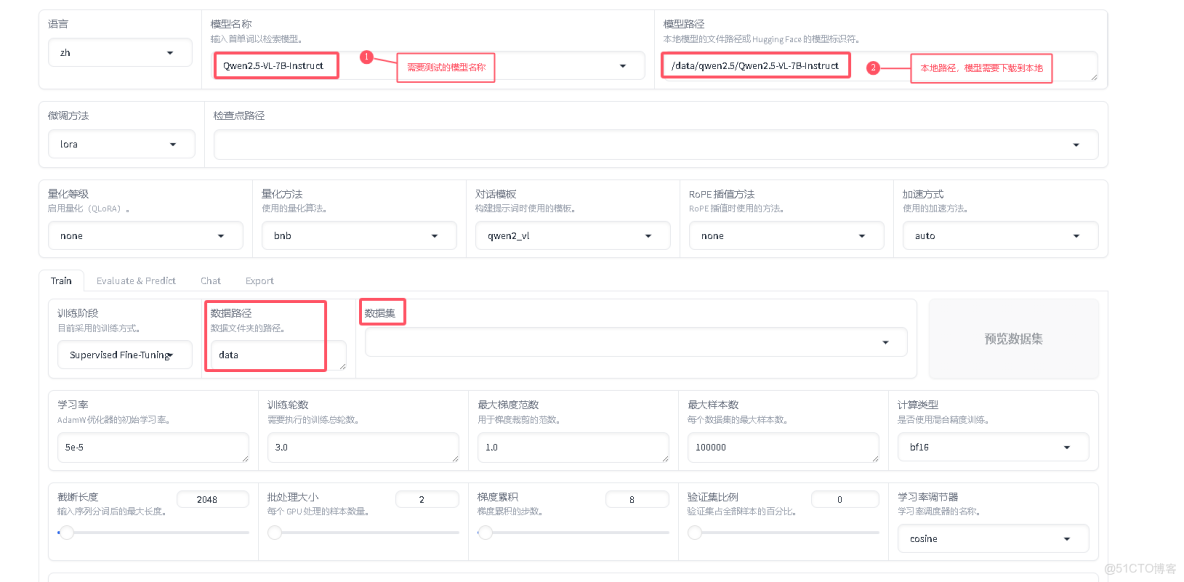

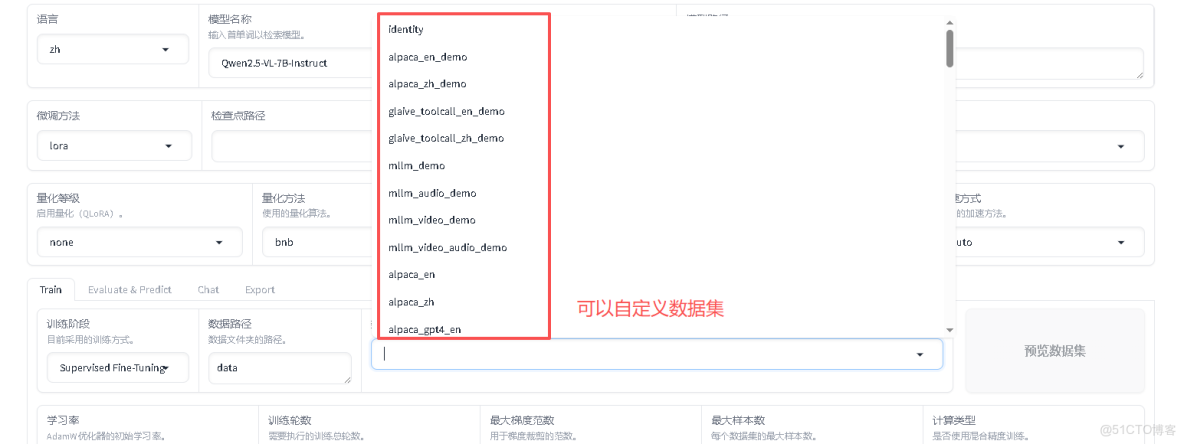
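If the page on port 7860 does not load, a quick reachability check from Python can tell whether anything is listening on that port at all. This is a minimal sketch using only the standard socket module; port_is_open is a hypothetical helper, and the host and port in the usage note are the ones from this setup.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection raises OSError (refused, unreachable, timed out) on failure
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Calling `port_is_open("192.168.3.166", 7860)` from the browser machine and `port_is_open("127.0.0.1", 7860)` on the GPU node helps separate the two cases: True locally but False remotely points at network binding or a firewall rather than at the WebUI process itself.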

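Note: the base model must not be a quantized version, because the trained adapter has to be merged into it afterwards, and merging does not support quantized models.

To use a custom dataset, modify the following two files.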

First, create the data file. The labels 建材, 裝飾 and 其他 mean building materials, decoration and other:

```
(llamafactory-py39) [root@gpu-node-166 data]# cd LLaMA-Factory/data
vim bid.json   # create the new data file
```

```json
[
  {
    "instruction": "請對以下進行分類,分類選項包括:建材、裝飾、其他",
    "input": "標題:關於通知\n正文:根據工作安排,現將相關事項通知如下。",
    "output": "建材"
  },
  {
    "instruction": "請對以下進行分類,分類選項包括:建材、裝飾、其他",
    "input": "標題:關於通知\n正文:根據工作安排,現將相關事項通知如下,具體詳情請參閲附件。",
    "output": "裝飾"
  }
]
```
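Larger datasets are easier to produce with a script than by hand. The following minimal sketch builds records in the same instruction/input/output layout as the samples above, checks that every output is one of the three labels, and writes a UTF-8 JSON array. make_record, validate and write_dataset are hypothetical helpers, and the title/body input template simply mirrors the two sample records.

```python
import json
from typing import Dict, Iterable, List

# Labels: building materials, decoration, other
LABELS = ("建材", "裝飾", "其他")
INSTRUCTION = "請對以下進行分類,分類選項包括:建材、裝飾、其他"


def make_record(title: str, body: str, label: str) -> Dict[str, str]:
    """Build one instruction/input/output record in the same layout as the samples."""
    if label not in LABELS:
        raise ValueError(f"unknown label: {label!r}")
    return {
        "instruction": INSTRUCTION,
        "input": f"標題:{title}\n正文:{body}",  # title + body, as in the samples
        "output": label,
    }


def validate(records: Iterable[dict]) -> List[str]:
    """Return a list of problems; an empty list means every record looks usable."""
    problems = []
    for i, rec in enumerate(records):
        missing = {"instruction", "input", "output"} - set(rec)
        if missing:
            problems.append(f"record {i}: missing {sorted(missing)}")
        elif rec["output"] not in LABELS:
            problems.append(f"record {i}: unexpected label {rec['output']!r}")
    return problems


def write_dataset(records: List[dict], path: str) -> None:
    """Write records as a JSON array; ensure_ascii=False keeps Chinese text readable."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(records, f, ensure_ascii=False, indent=2)
```

Running validate before write_dataset catches typos in labels early, which matters here because a misspelled label in the training data teaches the model an output that the classification script will never accept.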

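Then register the dataset in dataset_info.json (only the relevant entry is shown):

```
(llamafactory-py39) [root@gpu-node-166 data]# cat dataset_info.json
```

```json
{
  "adgen_local": {
    "file_name": "bid.json",
    "columns": {
      "prompt": "instruction",
      "query": "input",
      "response": "output"
    }
  }
}
```

Here adgen_local is the custom dataset name, and file_name is the custom data file created above. After making the changes, restart LLaMA-Factory.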
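The same registration can be scripted, which avoids hand-editing a large JSON file. This is a minimal sketch; register_dataset is a hypothetical helper that loads dataset_info.json from the given data directory, adds or overwrites one entry with the column mapping used above, and writes the file back. Rewriting the file also re-indents it, so keeping a backup copy of the original first is a good idea.

```python
import json
import os


def register_dataset(data_dir: str, name: str, file_name: str) -> dict:
    """Add (or overwrite) an instruction/input/output entry in <data_dir>/dataset_info.json."""
    info_path = os.path.join(data_dir, "dataset_info.json")
    info = {}
    if os.path.exists(info_path):
        with open(info_path, encoding="utf-8") as f:
            info = json.load(f)
    # Same column mapping as the adgen_local entry: prompt/query/response -> JSON keys
    info[name] = {
        "file_name": file_name,
        "columns": {"prompt": "instruction", "query": "input", "response": "output"},
    }
    with open(info_path, "w", encoding="utf-8") as f:
        json.dump(info, f, ensure_ascii=False, indent=2)
    return info
```

For this setup the call would be `register_dataset("LLaMA-Factory/data", "adgen_local", "bid.json")`; the WebUI still has to be restarted afterwards to pick up the change.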

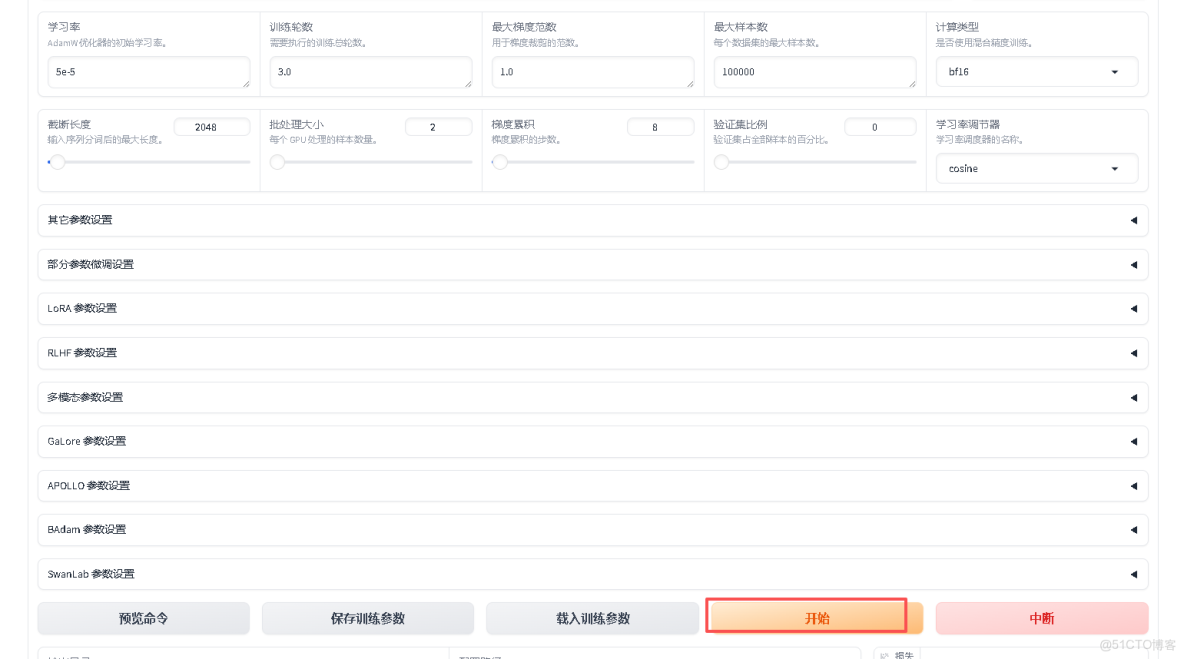

Then start training.

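When training finishes, a run directory is created under saves/ in the current directory. It holds the trained model, that is, the LoRA adapter, together with its intermediate checkpoints.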
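When several training runs accumulate under saves/Qwen1.5-7B-Chat/lora/, the newest run (and, if needed, its last intermediate checkpoint) can be located programmatically, for example to fill in adapter_name_or_path for the merge. This minimal sketch assumes the zero-padded train_YYYY-MM-DD-HH-MM-SS naming seen in this walkthrough, so that alphabetical order equals chronological order; latest_run and latest_checkpoint are hypothetical helpers. Checkpoint steps are compared numerically, because a plain string sort would place checkpoint-900 after checkpoint-1191.

```python
import os
import re
from typing import Optional

CKPT_RE = re.compile(r"^checkpoint-(\d+)$")


def latest_run(lora_dir: str) -> Optional[str]:
    """Newest train_* run directory; zero-padded timestamps sort chronologically."""
    runs = sorted(
        d for d in os.listdir(lora_dir)
        if d.startswith("train_") and os.path.isdir(os.path.join(lora_dir, d))
    )
    return os.path.join(lora_dir, runs[-1]) if runs else None


def latest_checkpoint(run_dir: str) -> Optional[str]:
    """checkpoint-N subdirectory with the largest step number N, or None."""
    best = None
    for d in os.listdir(run_dir):
        match = CKPT_RE.match(d)
        if match and os.path.isdir(os.path.join(run_dir, d)):
            step = int(match.group(1))
            if best is None or step > best[0]:
                best = (step, d)
    return os.path.join(run_dir, best[1]) if best else None
```

In the listing below, the run directory itself contains adapter_model.safetensors, which is the final adapter the merge config points at; latest_checkpoint is only needed if an intermediate snapshot is preferred.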

```
(llamafactory-py39) [root@gpu-node-166 data]# ls saves/Qwen1.5-7B-Chat/lora/
train_2025-10-17-16-12-17
(llamafactory-py39) [root@gpu-node-166 data]# ls saves/Qwen1.5-7B-Chat/lora/train_2025-10-17-16-12-17/
adapter_config.json checkpoint-100 checkpoint-300 checkpoint-800 README.md trainer_log.jsonl train_results.json
adapter_model.safetensors checkpoint-1000 checkpoint-400 checkpoint-900 running_log.txt trainer_state.json video_preprocessor_config.json
added_tokens.json checkpoint-1100 checkpoint-500 llamaboard_config.yaml special_tokens_map.json training_args.bin vocab.json
all_results.json checkpoint-1191 checkpoint-600 merges.txt tokenizer_config.json training_args.yaml
chat_template.jinja checkpoint-200 checkpoint-700 preprocessor_config.json tokenizer.json training_loss.png
```

Merging the model

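Write a merge configuration file:

```
(llamafactory-py39) [root@gpu-node-166 data]# vim llama3_q_lora.yaml
```

```yaml
### Note: DO NOT use quantized model or quantization_bit when merging lora adapters

### model
# Local path of the original (base) model
model_name_or_path: /data/qwen1.5/Qwen1.5-7B-Chat
# Path of the trained LoRA adapter
adapter_name_or_path: saves/Qwen1.5-7B-Chat/lora/train_2025-10-17-16-12-17/
# The model is a Qwen model, so use the qwen template
template: qwen
finetuning_type: lora

### export
# Output path of the merged model
export_dir: models/qwen2.5-7b-chat-lora-merged
# Shard size of the exported model, in GB
export_size: 2
# Device used for the merge
export_device: cpu
# false: save .safetensors files; true: save legacy .bin files
export_legacy_format: false
#export_legacy_format: true
```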
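The note at the top of this config, together with the earlier warning about quantized base models, can be checked before running the export. This is a heuristic sketch, not a guarantee: checkpoints quantized with GPTQ, AWQ or bitsandbytes and saved in Hugging Face format usually record a quantization_config block in config.json, whereas ordinary fp16/bf16 checkpoints do not. is_quantized is a hypothetical helper.

```python
import json
import os


def is_quantized(model_dir: str) -> bool:
    """Heuristic: True if the model's config.json declares a quantization_config block."""
    with open(os.path.join(model_dir, "config.json"), encoding="utf-8") as f:
        config = json.load(f)
    return "quantization_config" in config
```

For this setup, `is_quantized("/data/qwen1.5/Qwen1.5-7B-Chat")` is expected to return False; a True result means a different, unquantized base model should be used for model_name_or_path.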

Run the merge:

```
(llamafactory-py39) [root@gpu-node-166 data]# llamafactory-cli export llama3_q_lora.yaml
```
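Because export_size is the shard size in GB, the number of model-*.safetensors files the merge produces can be estimated in advance. This is a back-of-the-envelope sketch assuming 16-bit weights (fp16 or bf16, 2 bytes per parameter), decimal gigabytes, and roughly 7.7 billion parameters for Qwen1.5-7B-Chat; expected_shards is a hypothetical helper.

```python
import math


def expected_shards(num_params: float, bytes_per_param: int, export_size_gb: float) -> int:
    """Rough count of safetensors shards when each shard is capped at export_size_gb.

    Assumes decimal gigabytes; the real count can be slightly higher because a
    single tensor is never split across two shards.
    """
    total_bytes = num_params * bytes_per_param
    return math.ceil(total_bytes / (export_size_gb * 1e9))
```

With these assumptions, expected_shards(7.7e9, 2, 2) gives 8 (about 15.4 GB split into 2 GB pieces), which is consistent with the eight model-0000N-of-00008.safetensors files in the listing that follows; raising export_size to 5 would give 4 larger files.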

After the merge, the model is stored in:

```
(llamafactory-py39) [root@gpu-node-166 data]# ls models/qwen2.5-7b-chat-lora-merged
added_tokens.json merges.txt model-00004-of-00008.safetensors model-00008-of-00008.safetensors tokenizer_config.json
chat_template.jinja model-00001-of-00008.safetensors model-00005-of-00008.safetensors Modelfile tokenizer.json
config.json model-00002-of-00008.safetensors model-00006-of-00008.safetensors model.safetensors.index.json vocab.json
generation_config.json model-00003-of-00008.safetensors model-00007-of-00008.safetensors special_tokens_map.json
```

Then use the merged model to classify the data.
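Before loading the merged model, for example after copying the directory to another machine, it can help to confirm that every shard referenced by model.safetensors.index.json is present. The index file's weight_map maps each tensor name to the shard file that holds it; missing_shards is a hypothetical helper built on that.

```python
import json
import os
from typing import List


def missing_shards(model_dir: str) -> List[str]:
    """Shard files referenced by model.safetensors.index.json that are absent on disk."""
    with open(os.path.join(model_dir, "model.safetensors.index.json"), encoding="utf-8") as f:
        index = json.load(f)
    # Many tensors share one shard, so de-duplicate the file names first
    shard_files = sorted(set(index["weight_map"].values()))
    return [s for s in shard_files if not os.path.isfile(os.path.join(model_dir, s))]
```

`missing_shards("models/qwen2.5-7b-chat-lora-merged")` should return an empty list for a complete export.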

Create the script batch_classify-2.py (for example with vim batch_classify-2.py):

```python
import logging
import os
import sys
import time
from collections import Counter

import pandas as pd
import torch
from tqdm import tqdm
from transformers import AutoModelForCausalLM, AutoTokenizer

# --------------------------
# Basic configuration
# --------------------------
INPUT_EXCEL = "/data/qwen1.5/LLaMA-Factory/data/測試數據.xlsx"   # test data
OUTPUT_EXCEL = "/data/qwen1.5/LLaMA-Factory/data/分類結果.xlsx"  # classification results
SHEET_NAME = "Sheet1"
DATA_COLUMN = "文本"  # name of the text column in the Excel file

# --------------------------
# Model configuration
# --------------------------
MODEL_PATH = "/data/qwen1.5/lx/LLaMA-Factory/data/models/qwen2.5-7b-chat-lora-merged"

# --------------------------
# Classification rules (system prompt, kept in Chinese to match the training data).
# It restricts the output to 建材 (building materials, highest priority),
# 裝飾 (decoration) and 其他 (other, only when neither applies), and asks for
# the label alone, with no explanation, punctuation or extra text.
# --------------------------
BIDDING_CLASSIFICATION_RULES = """
你是專業的分類器,必須嚴格遵守以下規則:
1. 分類範圍:僅從以下3個固定類別中選擇,絕對不能輸出其他類別;
2. 類別列表(按優先級排序):
- 建材(最高優先級)
- 裝飾(最低優先級)
- 其他(僅當完全不符合以上2類時使用)
3. 輸出要求:只返回1個類別名稱,不附加任何解釋、標點或多餘文字。
"""

# --------------------------
# Runtime settings
# --------------------------
DELAY_BETWEEN_REQUESTS = 0.1  # seconds to pause between rows
MAX_RESPONSE_TOKENS = 10      # a label needs only a few tokens
MAX_INPUT_LENGTH = 1500       # characters kept from each text before truncation

# Logging
logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s - %(levelname)s - %(message)s",
    handlers=[logging.StreamHandler()],
)

# --------------------------
# Load the model
# --------------------------
logging.info("Loading model...")
try:
    tokenizer = AutoTokenizer.from_pretrained(
        MODEL_PATH,
        trust_remote_code=True,
        local_files_only=True,
    )
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_PATH,
        device_map="auto",
        torch_dtype=torch.float16,  # newer transformers releases rename this to dtype
        trust_remote_code=True,
        low_cpu_mem_usage=True,
        local_files_only=True,
    )
    logging.info("Model loaded successfully!")
except Exception as e:
    logging.error(f"Failed to load model: {e}")
    sys.exit(1)


# --------------------------
# Core classification function
# --------------------------
def classify_text(text):
    text = str(text).strip()
    if len(text) > MAX_INPUT_LENGTH:
        text = text[:MAX_INPUT_LENGTH] + "..."
        logging.debug(f"Text too long, truncated: {text[:50]}...")

    messages = [
        {"role": "system", "content": BIDDING_CLASSIFICATION_RULES},
        {"role": "user", "content": f"請對以下分類:{text}"},  # "Classify the following: ..."
    ]

    try:
        # With tokenize=False, apply_chat_template returns the prompt as text
        formatted_prompt = tokenizer.apply_chat_template(
            messages,
            tokenize=False,
            add_generation_prompt=True,
        )
        # Defensive: make sure formatted_prompt is a plain string
        if isinstance(formatted_prompt, list):
            formatted_prompt = formatted_prompt[0] if formatted_prompt else ""
        elif not isinstance(formatted_prompt, str):
            formatted_prompt = str(formatted_prompt)

        inputs = tokenizer(
            formatted_prompt,
            return_tensors="pt",
            max_length=2048,
            truncation=True,
        ).to(model.device)

        with torch.no_grad():
            outputs = model.generate(
                **inputs,
                max_new_tokens=MAX_RESPONSE_TOKENS,
                do_sample=False,  # greedy decoding, so no temperature setting is needed
                pad_token_id=tokenizer.eos_token_id,
                eos_token_id=tokenizer.eos_token_id,
            )

        # Decode only the newly generated tokens, not the prompt
        response = tokenizer.decode(
            outputs[0][len(inputs["input_ids"][0]):],
            skip_special_tokens=True,
        ).strip()

        # Substring match: return the first valid label, in priority order, found in the response
        valid_classes = ["建材", "裝飾", "其他"]
        for cls in valid_classes:
            if cls in response:
                return cls
        return "其他"
    except Exception as e:
        logging.error(f"Classification failed: {e}")
        return "其他"


# --------------------------
# Main flow
# --------------------------
def main():
    if not os.path.exists(INPUT_EXCEL):
        logging.error(f"Test data not found, check the path: {INPUT_EXCEL}")
        return

    try:
        logging.info("Reading Excel file...")
        # Try several engines: openpyxl (.xlsx), xlrd (legacy .xls), then pandas' default
        try:
            df = pd.read_excel(INPUT_EXCEL, sheet_name=SHEET_NAME, engine="openpyxl")
        except Exception:
            try:
                df = pd.read_excel(INPUT_EXCEL, sheet_name=SHEET_NAME, engine="xlrd")
            except Exception:
                df = pd.read_excel(INPUT_EXCEL, sheet_name=SHEET_NAME)
        logging.info(f"Read Excel successfully: {len(df)} rows")
    except Exception as e:
        logging.error(f"Failed to read Excel: {e}")
        return

    if DATA_COLUMN not in df.columns:
        logging.error(f"Column '{DATA_COLUMN}' not found in Excel; available columns: {df.columns.tolist()}")
        return

    df[DATA_COLUMN] = df[DATA_COLUMN].fillna("(空值)").astype(str)  # "(空值)" means "(empty)"
    texts = df[DATA_COLUMN].tolist()
    results = []

    # Smoke-test the model on a few samples
    logging.info("Testing the model's classification...")
    test_texts = [
        "本項目因故廢除",  # "This project has been cancelled"
        "XX公司XX公告",   # "XX company XX announcement"
        "XX時間記錄表",   # "XX time record sheet"
    ]
    for test in test_texts:
        result = classify_text(test)
        logging.info(f"Test '{test}' -> '{result}'")

    logging.info(f"Starting classification of {len(texts)} rows...")

    # Directory for intermediate results
    temp_dir = "/data/qwen1.5/LLaMA-Factory/data/tmp"
    os.makedirs(temp_dir, exist_ok=True)
    temp_excel_path = f"{temp_dir}/中間結果_彙總.xlsx"  # intermediate results summary

    # Classify row by row
    for i, text in enumerate(tqdm(texts, desc="Classification progress")):
        result = classify_text(text)
        results.append(result)

        # Save intermediate results every 20 rows
        if (i + 1) % 20 == 0:
            temp_df = df.iloc[: i + 1].copy()
            temp_df["分類結果"] = results[: i + 1]
            try:
                temp_df.to_excel(temp_excel_path, index=False, engine="openpyxl")
            except Exception:
                temp_df.to_excel(temp_excel_path, index=False)
            logging.info(f"Updated intermediate results ({i + 1} rows processed)")

        time.sleep(DELAY_BETWEEN_REQUESTS)

    # Save the final results; "分類結果" is the output column ("classification result")
    df["分類結果"] = results
    try:
        df.to_excel(OUTPUT_EXCEL, index=False, engine="openpyxl")
        logging.info(f"Done! Results saved to: {OUTPUT_EXCEL}")
        logging.info(f"Label counts: {dict(Counter(results))}")
    except Exception as e:
        backup = "/data/qwen1.5/lx/LLaMA-Factory/data/分類結果_備份.csv"
        df.to_csv(backup, index=False, encoding="utf-8-sig")
        logging.error(f"Failed to save Excel; backed up to CSV: {backup}, error: {e}")


if __name__ == "__main__":
    main()
```

Run it:

```
(qwen-inference) [root@gpu-node-166 data]# python batch_classify-2.py
2025-10-17 17:48:02,878 - INFO - Loading model...
`torch_dtype` is deprecated! Use `dtype` instead!
2025-10-17 17:48:03,927 - INFO - We will use 90% of the memory on device 0 for storing the model, and 10% for the buffer to avoid OOM. You can set `max_memory` in to a higher value to use more memory (at your own risk).
Loading checkpoint shards: 100%|████████████████████████████████████████| 8/8 [00:02<00:00, 3.61it/s]
2025-10-17 17:48:06,273 - WARNING - Some parameters are on the meta device because they were offloaded to the cpu.
2025-10-17 17:48:06,274 - INFO - Model loaded successfully!
2025-10-17 17:48:06,274 - INFO - Reading Excel file...
2025-10-17 17:48:06,400 - INFO - Read Excel successfully: 1588 rows
2025-10-17 17:48:06,401 - INFO - Testing the model's classification...
The following generation flags are not valid and may be ignored: ['temperature', 'top_p', 'top_k']. Set `TRANSFORMERS_VERBOSITY=info` for more details.
2025-10-17 17:48:10,905 - INFO - Test '本項目因故廢除' -> 'XX公告'
2025-10-17 17:48:13,945 - INFO - Test 'XX公司XX公告' -> 'XX公告'
2025-10-17 17:48:17,996 - INFO - Test 'XX時間記錄表' -> 'XX記錄'
2025-10-17 17:48:17,996 - INFO - Starting classification of 1588 rows...
Classification progress: 0%| |
Classification progress: 0%| | 1/1588
Classification progress: 0%|▏ |
Classification progress: 1%|█▏ | 14/1588 [00:42<1:27:47, 3.35s/it]
Classification progress: 1%|█▎ | 15/1588 [00:46<1:26:38, 3.30s/it]
Classification progress: 1%|█▋ | 19/1588 [00:57<1:19:27, 3.04s/it]
2025-10-17 17:49:18,801 - INFO - Updated intermediate results (20 rows processed)
Classification progress: 2%|███▍ | 39/1588 [01:57<1:16:59, 2.98s/it]
```
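In classify_text, any response that contains none of the three labels silently becomes 其他, so a genuine "other" answer and a malformed one look identical in the output file. The following minimal sketch factors the matching rule out into a pure function that can be unit-tested without loading the model, and additionally reports which raw responses needed the fallback. parse_label and summarize are hypothetical helpers; the rule itself (first label in priority order found as a substring) is the same one classify_text uses.

```python
from collections import Counter
from typing import Iterable, List, Sequence, Tuple

# Priority order, as in BIDDING_CLASSIFICATION_RULES: building materials, decoration, other
LABELS = ("建材", "裝飾", "其他")
DEFAULT_LABEL = "其他"


def parse_label(response: str, labels: Sequence[str] = LABELS) -> Tuple[str, bool]:
    """Return (label, matched): the first label found in the response, in priority order.

    matched is False when no label appeared and DEFAULT_LABEL was used as a fallback.
    """
    for label in labels:
        if label in response:
            return label, True
    return DEFAULT_LABEL, False


def summarize(responses: Iterable[str]) -> Tuple[Counter, List[str]]:
    """Count labels and collect the raw responses that needed the fallback."""
    counts: Counter = Counter()
    fallbacks: List[str] = []
    for response in responses:
        label, matched = parse_label(response)
        counts[label] += 1
        if not matched:
            fallbacks.append(response)
    return counts, fallbacks
```

To use this in the batch script, classify_text would need to keep the raw response text (for example by collecting it alongside each result); the number of fallbacks is then a quick signal of how often the model ignores the output format.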
